This is Part 2 of “The Search Tax.” Part 1 laid out what’s structurally wrong with ecommerce search. This part looks at why vendor promises are making it worse.

Everyone claims AI. Nobody explains it.

Open any ecommerce search vendor’s website and count how many times you see “AI-driven,” “AI-powered,” or “AI-first.” It’s on every homepage, every pitch deck, every case study headline.

Now try to find a single explanation of what the AI actually does.

Not what it promises. Not what outcomes it allegedly delivers. What it mechanically does — what data it trains on, what decisions it makes, what happens when it gets something wrong.

That search usually ends in a contact form.

This isn’t pedantry. It’s a practical problem with real consequences. If you can’t explain what your AI does, you can’t debug it when it fails. You can’t audit its decisions. You can’t distinguish a genuine improvement from a statistical anomaly. You’re trusting a system you can’t inspect, sold to you by people who can’t — or won’t — describe it.

The word “AI” in ecommerce search has become what “organic” became in food marketing: technically defensible, practically meaningless, and profitable precisely because nobody demands a definition.

The roadmap sale

There’s a pattern in B2B SaaS that search managers know intimately but rarely talk about publicly: the vendor sells the roadmap.

It works like this: the product as it exists today has limitations. The vendor knows this. So the sales pitch focuses on what’s coming — the features in development, the AI capabilities “rolling out in Q3,” the integration that’s “almost ready.”

You sign the contract based on a future product. Six months later, Q3 becomes Q1 of next year. The integration is “in beta.” The AI feature works, sort of, but requires manual tuning that nobody mentioned during the demo.

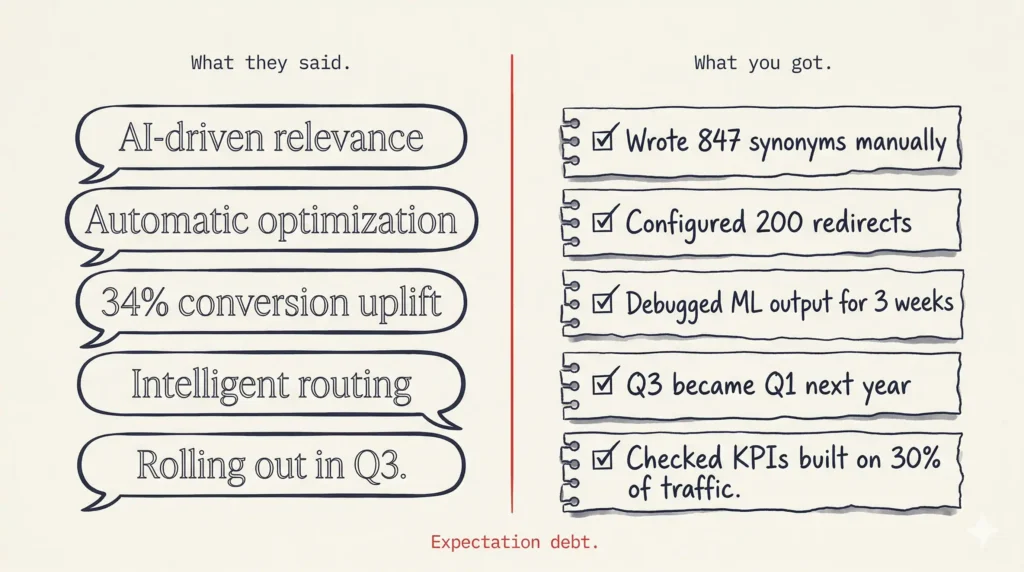

Meanwhile, your team adapts. They build workarounds. They write the synonyms the AI was supposed to handle. They configure the redirects the “intelligent routing” was supposed to manage. And gradually, the vendor’s unfulfilled promise becomes your team’s unpaid labor.

The search tax here is expectation debt. You paid for a future that didn’t arrive, and now you’re subsidizing the gap with human effort. The vendor’s revenue is unchanged. Your team’s workload has gone up.

“Our customer achieved X% uplift”

Case studies in ecommerce search follow a formula: customer name, impressive percentage, vague attribution to the vendor’s technology.

“Retailer X increased conversion by 34% with our AI-powered search.”

That sentence appears, in various forms, across dozens of vendor websites. And it tells you almost nothing.

What was the baseline? What else changed during the measurement period? Was it an A/B test or a before-and-after comparison? Was the “uplift” measured over a week, a month, a quarter? Did seasonal trends play a role? What exactly did the technology do — mechanically — that caused the change?

Without the mechanism, the number is a decoration. It’s a claim that sounds like proof but functions like marketing. And search managers know this. They’ve seen the same percentage attributed to three different vendors for the same customer in the same year.

Real proof follows a structure: Problem — what was broken. Mechanism — what specifically changed. Result — what measurably improved, over what period, verified how. If a case study skips the middle step, it’s not proof. It’s a press release.
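
To make the “verified how” part concrete, here is a minimal sketch of the check a case study should survive: a two-proportion z-test on A/B conversion data. The function name and the session and order counts are made up for illustration; they are not taken from any vendor or customer.

```python
import math

def uplift_check(control_orders, control_sessions, variant_orders, variant_sessions):
    """Two-sided two-proportion z-test for a conversion uplift (illustrative sketch)."""
    p_control = control_orders / control_sessions
    p_variant = variant_orders / variant_sessions
    # Pooled conversion rate under the assumption that both arms perform the same.
    pooled = (control_orders + variant_orders) / (control_sessions + variant_sessions)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / control_sessions + 1 / variant_sessions))
    z = (p_variant - p_control) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {
        "relative_uplift": (p_variant - p_control) / p_control,
        "z": z,
        "p_value": p_value,
    }

# Hypothetical numbers: the same 35% relative uplift at two sample sizes.
small = uplift_check(40, 2_000, 54, 2_000)
large = uplift_check(400, 20_000, 540, 20_000)
print(f"small sample: uplift={small['relative_uplift']:.0%}, p={small['p_value']:.3f}")
print(f"large sample: uplift={large['relative_uplift']:.0%}, p={large['p_value']:.6f}")
```

With these invented counts, the headline percentage is identical in both runs, but only the larger sample clears the conventional 0.05 threshold. A case study that reports the percentage without the sample size and test design leaves you unable to tell which of the two situations you are looking at.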

The “automatic” illusion

“Our search optimizes automatically.”

This claim is on more vendor websites than any other. And it might be the most expensive lie in ecommerce search.

Here’s what “automatic” usually means in practice: the system has a machine learning component that adjusts some parameters based on signals. Which parameters? Unclear. Which signals? “User behavior” — a term broad enough to mean anything. What happens when the adjustment goes wrong? Roll back manually. How do you know it went wrong? Check your KPIs. Which KPIs? The ones built on the 30% of traffic you can actually track.

The word “automatic” in search technology has become a permission slip for vendors to stop explaining how their product works. It shifts the burden of understanding from the seller to the buyer. And when the automatic system produces bad results, the vendor’s response is predictable: “Have you checked your configuration?”

Automatic should mean: the system does something specific, you can see what it did, and you can verify whether it worked. If “automatic” means “we can’t tell you what it does but trust us,” that’s not automation. That’s opacity with a subscription fee.
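
As a rough sketch of what that standard could look like in practice, the snippet below records every automatic adjustment with the parameter, the old and new value, and the signal that triggered it, and allows a rollback. All names here (`Adjustment`, `AuditedRanker`, `freshness_boost`) are hypothetical and do not describe any particular vendor’s product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Adjustment:
    """One automatic change, recorded so a human can inspect it later."""
    parameter: str
    old_value: float
    new_value: float
    signal: str          # what triggered the change, in plain language
    applied_at: datetime

class AuditedRanker:
    """Hypothetical ranking config whose automatic changes are always logged."""

    def __init__(self, params):
        self.params = dict(params)
        self.log = []

    def adjust(self, parameter, new_value, signal):
        # Raises KeyError for unknown parameters, so nothing changes silently.
        record = Adjustment(
            parameter=parameter,
            old_value=self.params[parameter],
            new_value=new_value,
            signal=signal,
            applied_at=datetime.now(timezone.utc),
        )
        self.params[parameter] = new_value
        self.log.append(record)
        return record

    def rollback_last(self):
        # Undo the most recent adjustment and restore the previous value.
        record = self.log.pop()
        self.params[record.parameter] = record.old_value
        return record

ranker = AuditedRanker({"freshness_boost": 1.0})
ranker.adjust("freshness_boost", 1.4, signal="higher click-through on new arrivals")
for entry in ranker.log:
    print(entry.parameter, entry.old_value, "->", entry.new_value, "because:", entry.signal)
```

The point of the sketch is the shape of the record, not the ranking logic: each change answers “which parameter, which signal, what happened,” which are exactly the questions the word “automatic” tends to leave open.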

Why specificity matters more than claims

The difference between a credible vendor and a performative one comes down to one thing: specificity.

A performative vendor says: “Our AI improves search relevance.”

A credible vendor says: “Our system maps 5,000 variants of ‘boxspringbett’ to a single purchase intent by tracking which queries led to clicks, basket adds, and purchases — not by matching keywords, but by matching behavior to outcomes.”

The first is a claim. The second is a mechanism. The first asks you to trust. The second asks you to verify.
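
A mechanism described at that level can be sketched in a few lines, which is part of what makes it verifiable. The example below is an illustration under stated assumptions, not the credible vendor’s actual system: the event format, the action weights, and the category names are all invented.

```python
from collections import Counter, defaultdict

# Illustrative weights: a purchase says more about intent than a click.
WEIGHTS = {"click": 1, "basket_add": 3, "purchase": 10}

def map_queries_to_intent(events):
    """Map each query variant to the category its behavior most strongly points to.

    `events` is an iterable of (query, action, category) tuples.
    """
    scores = defaultdict(Counter)
    for query, action, category in events:
        normalized = query.strip().lower()
        scores[normalized][category] += WEIGHTS.get(action, 0)
    # Keep only queries with at least one weighted outcome.
    return {
        query: counts.most_common(1)[0][0]
        for query, counts in scores.items()
        if sum(counts.values()) > 0
    }

events = [
    ("boxspringbett", "purchase", "box-spring beds"),
    ("boxspring bett", "basket_add", "box-spring beds"),
    ("boxspringbet", "click", "mattresses"),
    ("boxspringbet", "purchase", "box-spring beds"),
]
print(map_queries_to_intent(events))
```

No keyword matching happens here. The misspelled variant lands on the same intent as the correct spelling only because the recorded behavior points there, and anyone with access to the events can check why.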

Search managers live in a world of specifics. They know what a synonym rule does. They know what a redirect does. They can explain, query by query, why certain results appear. When a vendor can’t match that level of specificity about their own technology, it’s a red flag — not because the technology is necessarily bad, but because the vendor either doesn’t understand it or doesn’t want you to.

The search tax here is evaluation cost. Every hour spent decoding vague vendor claims is an hour not spent on actual search improvement. And the vendors who are hardest to evaluate are usually the ones who need the ambiguity most.

The trust gap

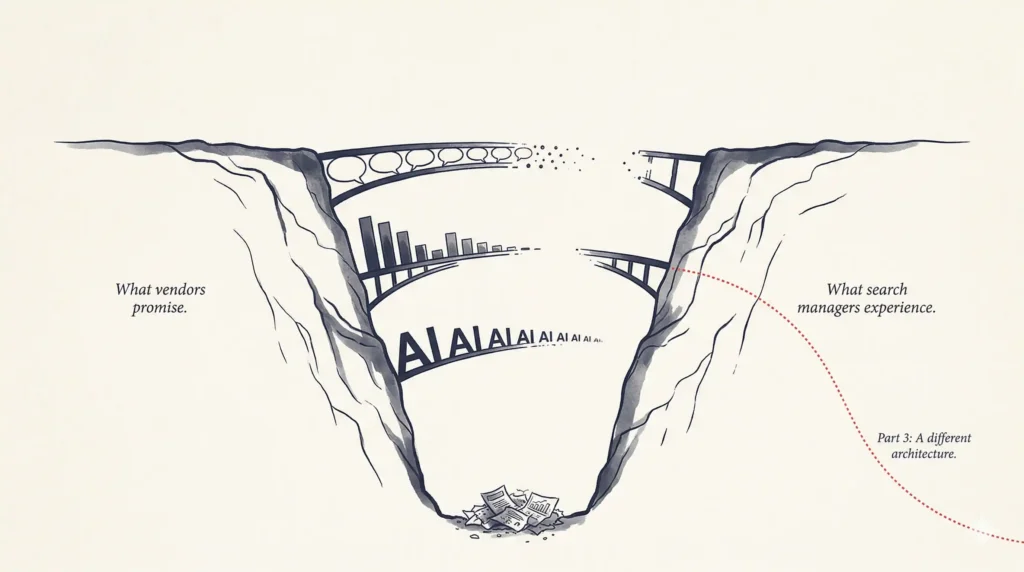

There’s a growing gap in ecommerce search between what vendors promise and what search managers experience.

The vendors promise intelligence, automation, and uplift. The search managers experience manual grind, opaque systems, and metrics they can’t verify. The vendors speak in outcomes. The search managers live in mechanisms.

This gap isn’t just inconvenient. It’s corrosive. It makes search managers skeptical of genuine innovation because they’ve been burned by artificial claims. It makes them reluctant to test new tools because the last “revolutionary” solution created more work, not less. And it keeps the industry trapped in a cycle where real progress is indistinguishable from marketing noise.

Breaking that cycle requires one thing vendors are remarkably reluctant to provide: transparency. Show the mechanism. Explain the data. Let the customer audit the logic. And if the results speak for themselves, let the results speak — without inflating them with adjectives that do nothing but trigger skepticism.

In Part 3, we’ll look at what it actually takes to stop paying the search tax — not with another set of promises, but with a different architecture.